It’s terrible news for anyone who values history, loves Paris, or has read Victor Hugo: Notre-Dame Cathedral, the Gothic gem at the historic center of Paris, is on fire. It’s obviously far too early for anything conclusive, but early suggestions from officials are that the blaze could be related to the ongoing renovations to the roof. There’s no indication at this writing that it’s a terror attack or related in any way to a terrorist group.

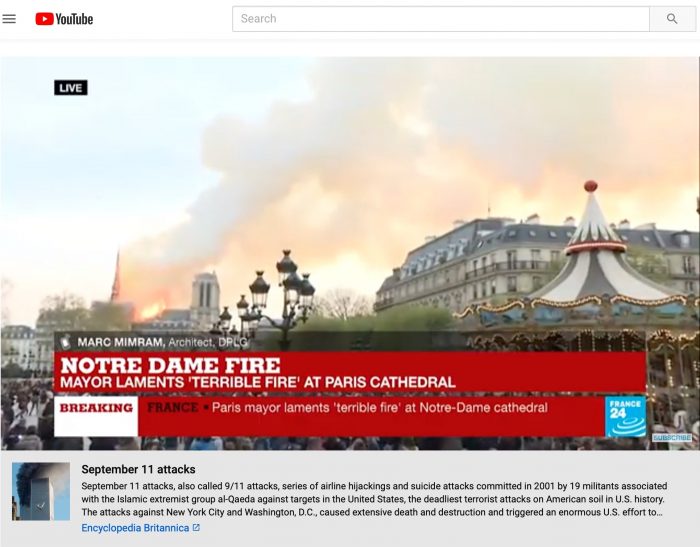

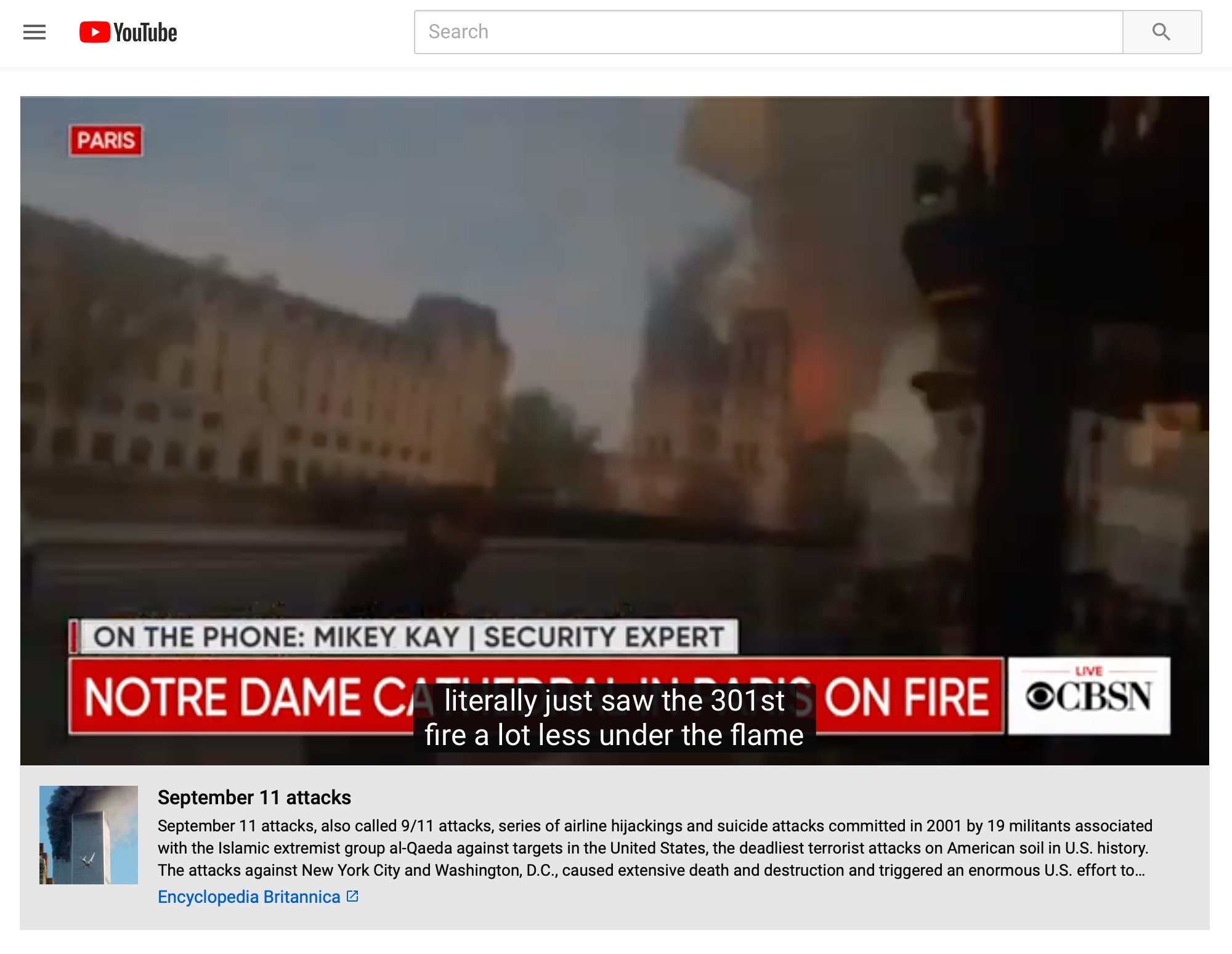

So as people turned to YouTube to see live streams from trusted news organizations of the fire in progress, why was YouTube showing them background information about 9/11?

I first noticed this when I went to France24, which produces an English-language feed, and saw the unusual box.

Why in the world is @YouTube putting information about 9/11 underneath the Notre Dame livestream from @FRANCE24?

(Especially since it seems like, at least right now, ongoing renovations are the most likely cause, no indication of terror) https://t.co/A3HP36epxx pic.twitter.com/ZheCMC5pcG

— Joshua Benton (@jbenton) April 15, 2019

Looking around, along with France24, I also saw the 9/11 info on the streams of CBS News and NBC News.

Why is YouTube adding information to these videos that seems tailor-made to make people think it’s a terror attack? I asked Google and got this statement from a spokesperson:

We are deeply saddened by the ongoing fire at the Notre Dame cathedral. Last year, we launched information panels with links to third party sources like Encyclopedia Britannica and Wikipedia for subjects subject to misinformation. These panels are triggered algorithmically and our systems sometimes make the wrong call. We are disabling these panels for live streams related to the fire.

You may remember those information panels from when they were announced at SXSW last year, with CEO Susan Wojcicki saying that:

…when there are videos that are focused around something that’s a conspiracy — and we’re using a list of well-known internet conspiracies from Wikipedia — then we will show a companion unit of information from Wikipedia showing that here is information about the event.

That well-intentioned effort faced criticism on a couple of fronts: Google’s YouTube would be freeloading on the backs of unpaid Wikipedia editors, and those info boxes (with a link to Wikipedia) risked infecting that comparatively conspiracy-resistant platform with a bunch of YouTube crazies.

YouTube has expanded that effort in a few ways over time, including showing the boxes when someone searches for conspiracy-friendly terms (even if they don’t click through to a video) and using similar methods to denote news organizations that receive government funding.

It’s unclear why a breaking news event — one about which there hasn’t been time for any substantial conspiracies to take root — got the information panel, much less a 9/11 one; Google fixed the problem less than an hour after I noticed it. But it’s a reminder that even efforts to limit misinformation can end up spreading it instead — and that human editors watching over the algorithms can be a pretty good thing, too.

UPDATE, 5:40 p.m.: A few quick follow-ups now that this story has been picked up by other sites. First, here’s a previous example of the 9/11 infobox being added to an unrelated video; KCRW’s Mario Cotto noted that some old footage of New York City from 1976 got tagged with it:

check out this cool engineering. can’t watch some old super 8mm footage of nyc in 1976 without being reminded of 9/11 and there’s no way to make it go away. but the disclaimer is priceless. pic.twitter.com/qt6VO73dvt

— grittysignedbible (@mariocotto) April 10, 2019

The title and description of that video don’t mention anything more 9/11-related than “New York”; there’s no mention of the World Trade Center, for instance. (The infobox has since been removed.)

Then there’s this from CUNY’s Luke Waltzer: a video of his father Ken’s retirement from Michigan State, which somehow got labeled with a “Jew” infobox:

seen on a video of my father last fall, since removed. pic.twitter.com/O9nM1U1uAn

— Luke Waltzer (@lwaltzer) April 15, 2019

Ken Waltzer used to head the Jewish Studies program at Michigan State, but again, nothing in the title or description mentions anything Jewish.

Google’s official description of the infobox program says that it places the boxes “alongside videos on a small number of well-established historical and scientific topics that have often been subject to misinformation online, like the moon landing…This information panel will appear alongside videos related to the topic, regardless of the opinions or perspectives expressed in the videos.” I guess the algorithm it’s using considered a video about a Jewish man retiring to be sufficiently about the topic of “Jew” to merit the box, just as it considered random 40-year-old footage of New York to be “related” to 9/11.

In other words, it isn’t just that the algorithm sometimes completely misses the boat, like confusing Notre-Dame and the World Trade Center. Even when it’s not making a big categorization error, it can still be putting up very inappropriate “information.”

Some other examples: A video of a launch of the Falcon Heavy rocket got labeled with 9/11 — presumably because it showed two towers and a lot of smoke?

@YouTube @Google Your CV algorithm for the informational panel from encyclopedia Britannica thinks the Falcon Heavy Launch looks like 9/11. Please fix asap. pic.twitter.com/vvXpoPp2pV

— Scott (@swilcox26) April 10, 2019

9/11 also got attached to a video stream promising “College Music · 24/7 Live Radio · Study Music · Chill Music · Calming Music”:

@YouTube, please tell me how to get rid of this depressing Encyclopedia Britannica information. I get it, you're trying to stop misinformation about important topics but I believe in facts and science so please help me here. pic.twitter.com/J3t2pLHZIM

— Beatrix Kiddo (@MsBeatrixKiddo) March 11, 2019

Same for a video of a random fire in San Francisco:

Sorry @youtube, this is some poor placement! 🤖🤡@Britannica @KPIXtv @YouTubeInsider pic.twitter.com/rbAfFqpw4d

— Peter Habicht (@habicht) February 7, 2019

A couple of other thoughts: Mike Caulfield rightly notes that simply linking to accurate information isn’t the best way to battle a conspiracy theory.

One repeated criticism I've had of panels is in linking to *positive* information (article on Sept 11 attacks) instead of *negative* information (article on 9/11 conspiracy theories and why they are bunk) they force the user to make (often wrong) guesses as to why it's there. https://t.co/10pGY82Rai

— Mike Caulfield (@holden) April 15, 2019

Bassey Etim says that while human monitoring of every topic on YouTube is obviously impractical, there’s no reason it couldn’t use humans on a first pass for this sort of stuff on the most important stories — especially the big breaking ones.

That every aspect of the top 100 global stories isn't manually curated 24/7 by @YouTube is absolutely baffling.

This is extremely low hanging fruit, people! https://t.co/ozXMsqRB12

— Bassey Etim (@BasseyE) April 15, 2019

(Etim used to lead content moderation at The New York Times, so he knows the value of giving humans oversight over a small subset of the most important information judgment calls, while letting algorithms handle the rest.)