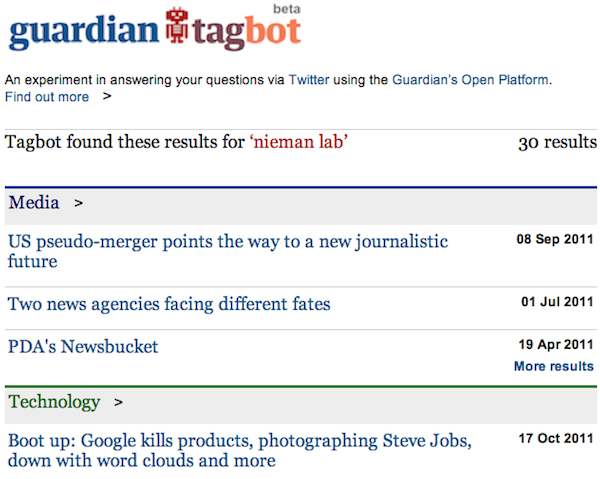

So robots are one step closer to world domination. Or at least to info domination. This morning, the Guardian announced the birth of @GuardianTagBot, the living, tweeting, occasionally sleeping Twitter account that serves as the public face of the Guardian’s content API explorer. Tweet @GuardianTagBot with a search term, or a whole group of search terms, and it’ll @-reply you with a link to Guardian content that matches your query. Whether you’re looking for Nieman Lab mentions in the Guardian (who isn’t?), or wondering what Nick Clegg is up to today (ditto), or concerned that David Cameron may be a lizard (um)…the bot probably has your answer.

“It’s rather like playing fetch with our articles, videos, galleries and audio,” Nina Lovelace, the Guardian’s content development manager, explained in a post announcing the tool. Returns are ad hoc (you have to re-ask @GuardianTagBot each time you want updated search results), but Meg Pickard, the Guardian’s head of digital engagement, points out that if you save a search for GuardianTagBot, you can see results in real time as well.

Again: world domination. Siri-ously.

The TagBot was developed in collaboration with the social media agency Smesh. When I asked Lovelace for more detail about TagBot’s interface, she replied in an email that the tool:

> has been built by Smesh developers, who have implemented a kind of ‘Twitter bridge’ between Twitter and the Guardian’s content API. Smesh have built on their existing infrastructure for realtime tweet-wrangling to capture all incoming tweets @GuardianTagbot via Twitter’s streaming API. Smesh’s software then performs some semantic analysis on the tweets, cross-referencing against a customised version of the Guardian’s tagging database to find likely matches. Smesh then formulates a query against the Guardian content API, performs some additional processing on the results, and builds a dynamic results page to tweet back to the user. Hopefully with excellent quality content matches in!
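
For the curious, the middle of that pipeline (tweet in, tag match, API query out) can be roughed out in a few lines of Python. This is only a sketch under loud assumptions, not Smesh's code: the tiny `TAGS` table stands in for the customised tagging database, the tag IDs in it are illustrative, the matching is plain phrase lookup rather than real semantic analysis, and the endpoint and the `tag`, `q` and `api-key` parameter names are my assumptions about how a content API search might be addressed.

```python
import re
from urllib.parse import urlencode

# Hypothetical stand-in for the customised tagging database:
# maps a phrase a user might tweet to an illustrative tag ID.
TAGS = {
    "nick clegg": "politics/nickclegg",
    "david cameron": "politics/davidcameron",
}

BOT_HANDLE = "@guardiantagbot"
# Assumed search endpoint; treat it as a placeholder.
SEARCH_ENDPOINT = "https://content.guardianapis.com/search"


def normalise(tweet: str) -> str:
    """Lowercase the tweet, drop the bot's handle and punctuation, collapse spaces."""
    text = tweet.lower().replace(BOT_HANDLE, " ")
    text = re.sub(r"[^a-z0-9\s]", " ", text)
    return " ".join(text.split())


def match_tags(text: str) -> list:
    """Return tag IDs whose phrase appears as whole words in the text."""
    padded = f" {text} "
    return [tag for phrase, tag in TAGS.items() if f" {phrase} " in padded]


def build_query(tweet: str, api_key: str = "test") -> str:
    """Build a search URL: filter by matched tags, else fall back to free text."""
    text = normalise(tweet)
    tags = match_tags(text)
    params = {"tag": ",".join(tags)} if tags else {"q": text}
    params["api-key"] = api_key
    return f"{SEARCH_ENDPOINT}?{urlencode(params)}"


if __name__ == "__main__":
    print(build_query("@GuardianTagBot Nick Clegg"))
    print(build_query("@GuardianTagBot shed"))
```

The fallback to a free-text query when nothing matches is my own design choice for the sketch; it is also the case where a missing tag would show up.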

TagBot is, as a robo-infant, a fragile creature; at this point, it can process only 3,000 queries a day. “The current primary limitation is around the degree of semantic analysis that it’s been possible to deliver for a fast beta build,” Lovelace told me. The 3,000-response limitation is based on Twitter’s API limits; the system itself, she said, “is lightweight, fast and scalable” — and “we hope to see the response limit raised or removed if the project continues past its beta phase, which will last a month.” (To account for any service interruptions, the Guardian has created a shadow account for @GuardianTagBot that can answer bot-related questions when TagBot tires out. It’s a telling one: @TagBotsHuman.)
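
A daily cap like that is simple to enforce on the bot's side. Here is a minimal sketch of one way to do it, assuming a counter that resets when the calendar date changes; the `DailyQuota` class and its method names are hypothetical, and nothing here reflects how Smesh or Twitter actually count responses.

```python
from datetime import date


class DailyQuota:
    """Hypothetical per-day response counter; resets when the date changes."""

    def __init__(self, limit: int = 3000):
        self.limit = limit
        self.day = None
        self.used = 0

    def allow(self, today: date) -> bool:
        """Return True and count the reply if today's budget is not yet spent."""
        if today != self.day:
            # First request of a new day: start counting from zero again.
            self.day = today
            self.used = 0
        if self.used >= self.limit:
            return False
        self.used += 1
        return True


if __name__ == "__main__":
    quota = DailyQuota(limit=3000)
    if quota.allow(date.today()):
        print("reply to the tweet")
    else:
        print("bot is resting until tomorrow")
```

When `allow` returns `False`, the bot would simply stay quiet, which is presumably where a human-run backup account comes in.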

TagBot is cleverly dual-purpose: On the one hand, it’s a useful (and, given the bot’s cheeky anthropomorphism, fun) service for Guardian users — one that, given its Guardian-content-only query returns, has the nice side effect of encouraging pageview-friendly, brand-centric site navigation. But the even-more-interesting innovation is the “please rate me!” request you’ll see below the bot’s search returns — one that asks you to tell TagBot whether it’s been “a good Bot” or “a bad Bot.” (Again: cheeky.) Data culled from @GuardianTagBot searches, Pickard told me, will help the Guardian’s tech editorial team to refine the site’s tag taxonomy — ostensibly both by learning what popular tags might be missing (“shed” has been one weird example) and by collecting semantic search data from users.

“TagBot will definitely make some mistakes,” Lovelace notes in her post, “but it’s here to help us check how well our tagging system is working.”

Essentially, the Guardian is crowdsourcing part of its tag taxonomy, looking to users to help it determine the tags, terms, and overall infrastructure that will best help it do the increasingly crucial job of site organization. If @GuardianTagBot catches on with users, it should provide a valuable (and yet basically cost-free) dataset for the Guardian’s tech team. Even after just a few hours of life, Lovelace told me, TagBot “is already showing us how our tagging system could be improved to better suit users.”

TagBot will also allow the Guardian, Lovelace notes, to test whether, in the future, the paper could provide a service that would allow users to sign up for tag-based content updates delivered through social media. (Maybe something like Google Alerts for Guardian content, delivered through Twitter.) Lovelace, true to the Guardian’s open interface, is looking for thoughts on that: “It would be great to get feedback,” she told me, “on how users might want this developed in future to tagbot@guardian.co.uk.”

TagBot, as a component of the paper’s digital-first move, is part of a larger effort at the Guardian to demonstrate — to users, to developers, and, significantly, to potential commercial partners — the cool things that can be done with the Guardian’s API. (“For example,” Lovelace says, “we are able to build similar services for clients if they’d like us to, using our great content, on different social networks and beyond.”) It’s yet another way for people to start thinking of the Guardian less as a newspaper, and more as a data platform. “Both the Guardian and Smesh are excited about potential for developing the idea further,” Lovelace says. “We have some great ideas, so watch this space.”