The growing stream of reporting on and data about fake news, misinformation, partisan content, and news literacy is hard to keep up with. This weekly roundup offers the highlights of what you might have missed.

“新闻软文,” or “News-style soft article.” Want to discredit a journalist? That’ll be $55,000. 100,000 real people’s signatures on a Change.org petition? $6,000. And those Chinese “soft articles” can be bought for as little as $15. Researchers at security software company Trend Micro studied Chinese, Russian, Arabic/Middle Eastern, and English marketplaces and found that “everything from social media promotions, creation of fake comments, and even online vote manipulation [is] sold at very reasonable prices. Surprisingly, we found that fake news campaigns aren’t always the handiwork of autonomous bots, but can also be carried out by real people via large, crowdsourcing programs.”

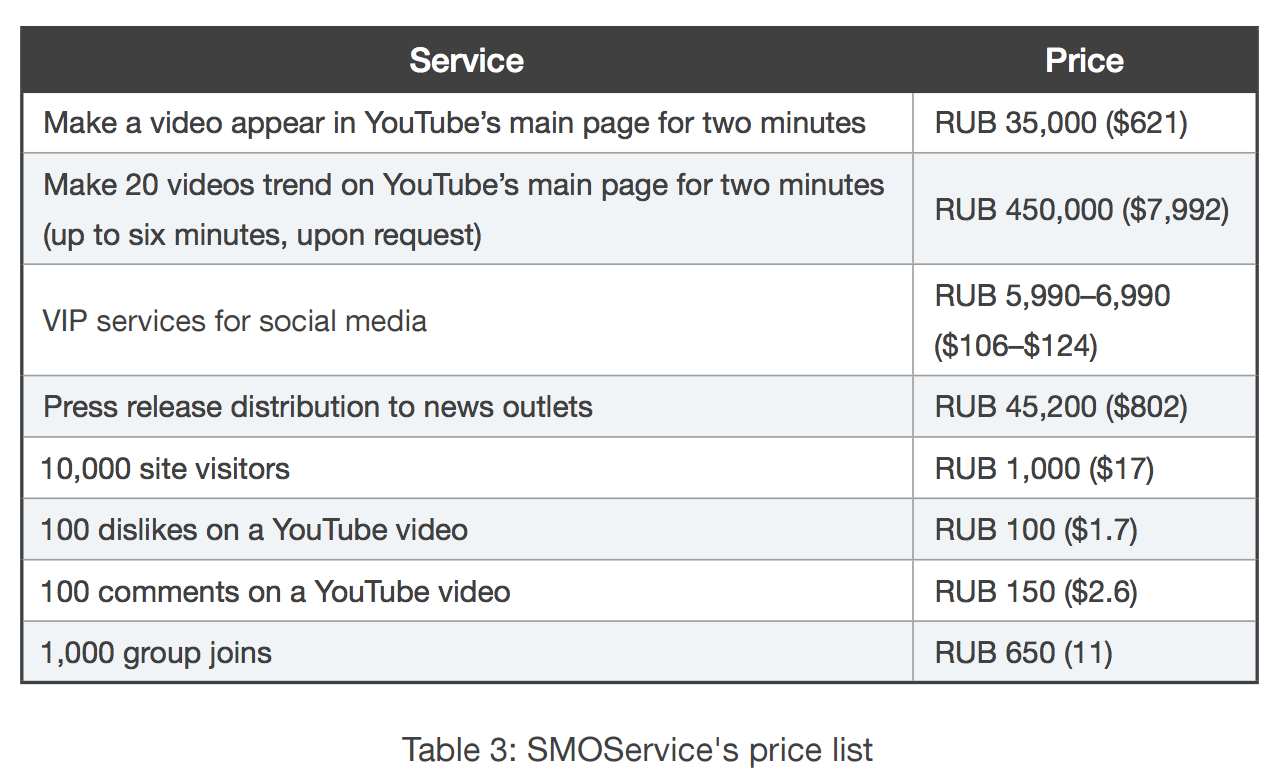

The report, “The fake news machine: How propagandists abuse the internet and manipulate the public,” is impressively detailed and researched, featuring price lists in multiple languages and case studies from several countries. Below is the list of offerings from Russian forum SMOService, which “offers a wide range of services that can also come with bulk discounts (up to 55%) and all-in-one VIP bundles. Some of its notable features include populating groups with live accounts and bots, support for more platforms (Telegram, Periscope, and MoiMir), friend requests, dislikes, making a video trend on YouTube, hidden services for VIP users, geolocation-specific services, and 24/7 customer support.”

“By now it should be very clear that social media has very strong effects on the real world,” the paper’s authors write. “It can no longer be dismissed as ‘things that happen on the internet.’ What goes on inside Facebook, Twitter, and other social media platforms can change the course of nations.”

Can’t we just let the robots handle this? (Sorry, no.) Fake News Challenge, “a grassroots effort of over 100 volunteers and 71 teams from academia and industry around the world,” seeks to “explore how artificial intelligence technologies, particularly machine learning and natural language processing, might be leveraged to combat the fake news problem.” (Advisors include Poynter’s Alexios Mantzarlis and First Draft News’ Claire Wardle.) For the Challenge’s first contest, teams were invited to focus on stance detection, which “involves estimating the relative perspective (or stance) of two pieces of text relative to a topic, claim or issue” and “could serve as a useful building block in an AI-assisted fact-checking pipeline.” Here are the winners. Here’s more from Tom Simonite at Wired:

“A lot of the work of fact-checkers and journalists tracking fake news is manual, and I hope we can change that,” says Delip Rao, an organizer of the Fake News Challenge, and founder of Joostware, which builds machine learning systems. “If you catch a fake news item in the first few hours you have a chance to prevent it from spreading, but after 24 hours it becomes difficult to contain.”

Fake News Challenge plans to announce more contests in coming months. One option for the next one is asking people to make code that can screen images with overlaid text. That format has been adopted by some people who set up fake news sites to harvest ad dollars after new controls were introduced by Google and Facebook, says Rao.
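To make the stance detection task described above a little more concrete, here is a toy Python sketch. It is not the method used by any Challenge team: the label names, the cue-word list, the threshold, and the function names are all assumptions made for illustration. It measures how many headline words also appear in the body text, calls the pair "unrelated" if the overlap is low, and otherwise guesses "disagree" when the body contains a refuting cue word.

```python
import re

# Hypothetical cue words chosen for illustration only; a real system
# would learn such signals from labeled headline/body pairs.
REFUTING_WORDS = {"fake", "hoax", "false", "denies", "debunked"}


def tokenize(text):
    """Lowercase the text and return its set of alphabetic tokens."""
    return set(re.findall(r"[a-z]+", text.lower()))


def overlap(headline, body):
    """Fraction of headline tokens that also appear in the body."""
    headline_tokens = tokenize(headline)
    if not headline_tokens:
        return 0.0
    return len(headline_tokens & tokenize(body)) / len(headline_tokens)


def toy_stance(headline, body, related_threshold=0.5):
    """Crude stance guess: 'unrelated', 'disagree', or 'agree'.

    The threshold and labels are assumptions, not part of any
    published specification.
    """
    if overlap(headline, body) < related_threshold:
        return "unrelated"
    if tokenize(body) & REFUTING_WORDS:
        return "disagree"
    return "agree"


if __name__ == "__main__":
    headline = "Mayor bans bicycles downtown"
    bodies = [
        "The mayor signed an order that bans bicycles downtown.",
        "The mayor's office denies that bicycles are banned downtown.",
        "Local bakery wins national award for sourdough.",
    ]
    for body in bodies:
        print(toy_stance(headline, body), "|", body)
```

A heuristic this simple is easily fooled by sarcasm, paraphrase, or a quoted denial, which is part of why the organizers frame stance detection as a building block for fact-checkers rather than a replacement for them.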

Facebook wants you to email it with your “hard questions.” Facebook is “starting a new effort to talk more openly about some complex subjects,” which so far seems to mean emailing hardquestions@fb.com with “input.” The company says it will explore questions like “Who gets to define what’s false news — and what’s simply controversial political speech?” and “Is social media good for democracy?” On the one hand, this feels highly mockable. On the other hand, Poynter’s Alexios Mantzarlis suggested last week that one way to combat the “partisan divide in the reception of fact checking” (i.e., a lot of conservatives don’t trust fact checkers) might be “establishing reader panels that could provide interesting data,” so perhaps it’s worth a shot?