But the majority of visual misinformation that people are exposed to involves much simpler forms of deception. One common technique involves recycling legitimate old photographs and videos and presenting them as evidence of recent events.

For example, Turning Point USA, a conservative group with over 1.5 million followers on Facebook, posted a photo of a ransacked grocery store with the caption “YUP! #SocialismSucks.” In reality, the empty supermarket shelves have nothing to do with socialism; the photo was taken in Japan after a major earthquake in 2011.

In another instance, after a global warming protest in London’s Hyde Park in 2019, photos began circulating as proof that the protesters had left the area covered in trash. In reality, some of the photos were from Mumbai, India, and others came from a completely different event in the park.

I’m a cognitive psychologist who studies how people learn correct and incorrect information from the world around them. Psychological research demonstrates that these out-of-context photographs can be a particularly potent form of misinformation. And unlike deepfakes, they are incredibly simple to create.

Out-of-context photos are a very common source of misinformation. In the day after the January 2020 Iranian attack on U.S. military bases in Iraq, reporter Jane Lytvynenko at BuzzFeed News documented numerous instances of old photos or videos being presented on social media as evidence of the attack. These included photos from a 2017 military strike by Iran in Syria, video of Russian training exercises from 2014 and even footage from a video game. In fact, of the 22 false rumors documented in the article, 12 involved this kind of out-of-context photo or video.

This form of misinformation can be particularly dangerous because images are a powerful tool for swaying popular opinion and promoting false beliefs. Psychological research has shown that people are more likely to believe both true and false trivia statements, such as “turtles are deaf,” when they’re presented alongside an image. In addition, people are more likely to claim they’ve previously seen freshly made-up headlines when they’re accompanied by a photograph. Photos also increase the number of likes and shares that a post receives in a simulated social media environment, along with people’s belief that the post is true.

And pictures can alter what people remember from the news. In one experiment, a group of people read a news article about a hurricane accompanied by a photograph of a village after the storm. They were more likely to falsely remember that there were deaths and serious injuries than people who instead saw a photo of the village before the hurricane struck. This suggests that the false pictures of the Jan. 2020 Iranian attack may have affected people’s memory for details of the event.

There are a number of reasons photographs likely increase your belief in statements. First, you’re used to photographs being used for photojournalism and serving as proof that an event happened. Second, seeing a photograph can help you more quickly retrieve related information from memory. People tend to use this ease of retrieval as a signal that information is true.

Photographs also make it easier to imagine an event happening, which can make it feel more true.

Finally, pictures simply capture your attention. A 2015 study by Adobe found that posts that included images received more than three times as many Facebook interactions as posts with just text.

Journalists, researchers and technologists have begun working on this problem. The News Provenance Project, a collaboration between The New York Times and IBM, released a proof-of-concept strategy for how images could be labeled to include more information about their age, the location where they were taken and their original publisher. This simple check could help prevent old images from being used to support false information about recent events. In addition, social media companies such as Facebook, Reddit and Twitter could begin to label photographs with information about when they were first published on the platform.

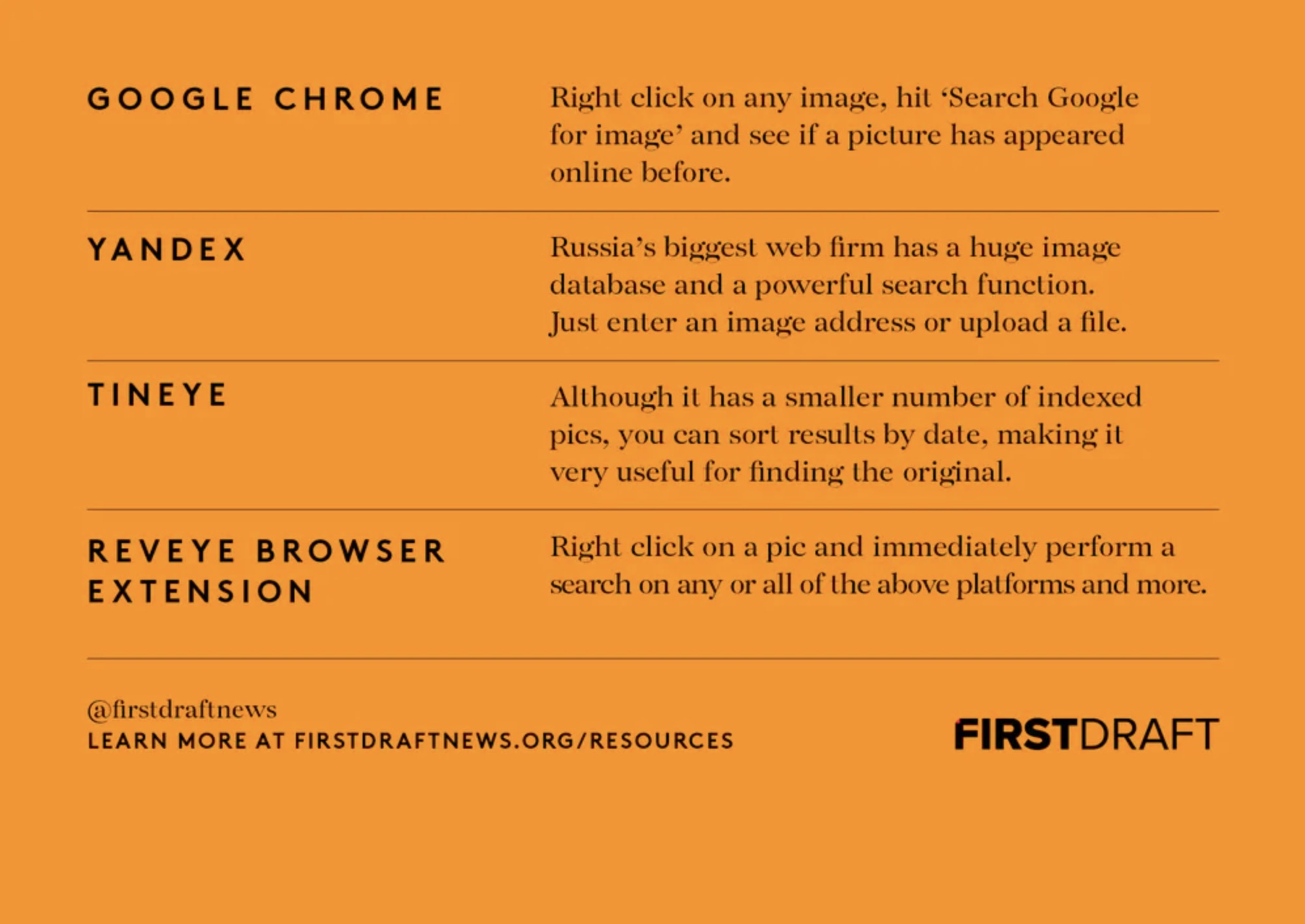

Until these kinds of solutions are implemented, though, readers are left on their own. One of the best techniques to protect yourself from misinformation, especially during a breaking news event, is to use a reverse image search. In Google Chrome, it’s as simple as right-clicking on a photograph and choosing “Search Google for image.” You’ll then see a list of all the other places that photograph has appeared online.

As consumers and users of social media, we have a responsibility to ensure that the information we share is accurate and informative. By keeping an eye out for out-of-context photographs, you can help keep misinformation in check.

Lisa Fazio is an assistant professor of psychology at Vanderbilt University. This article is republished from The Conversation under a Creative Commons license.