Importantly, YouTube also says it will make “authoritative sources readily accessible,” adding text-based news article snippets to search results during developing events. A Wired piece pointed out a potential problem with those “authoritative” sources:

In the coming weeks, YouTube will start to display an information panel above videos about developing stories, which will include a link to an article that Google News deems to be most relevant and authoritative on the subject. The move is meant to help prevent hastily recorded hoax videos from rising to the top of YouTube’s recommendations. And yet, Google News hardly has a spotless record when it comes to promoting authoritative content. Following the 2016 election, the tool surfaced a WordPress blog falsely claiming Donald Trump won the popular vote as one of the top results for the term “final election results.”

YouTube is announcing a slew of changes it hopes will elevate "authoritative" news sources during breaking news events. But determining who is and isn't authoritative isn't so easy in a divided media environment, let alone a global one https://t.co/XG6TTw5JkK

— issie lapowsky (@issielapowsky) July 9, 2018

Good news for broadcasters like @bbcnews but definition of “authoritative” will be controversial https://t.co/EW8eK1HwAu

— Mark Frankel (@markfrankel29) July 10, 2018

YouTube will also provide links to more information on a “small number of well-established” topics, and won’t just lean on Wikipedia for those.

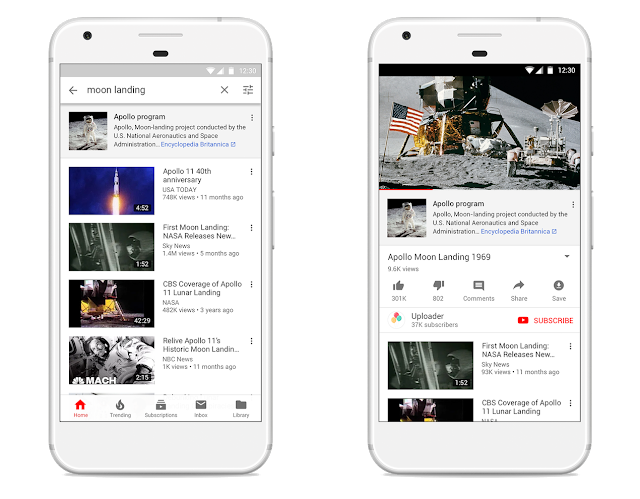

Starting [Monday], users will begin seeing information from third parties, including Wikipedia and Encyclopædia Britannica, alongside videos on a small number of well-established historical and scientific topics that have often been subject to misinformation, like the moon landing and the Oklahoma City Bombing.

As of midday Tuesday, I didn’t see these links out to third-party sources yet, but here’s YouTube’s illustration of what would come up when someone searches for “Moon Landing”:

YouTube also said it’s committed to hiring more people who will directly work with news organizations, and it’s convening a working group of representatives from news organizations to help surface issues and develop features (Vox Media, Brazil’s Jovem Pan, and India Today are cited as members of the group).

The company’s full announcement is here.
